Measure, Mend and Magnify GRM Effectiveness

“If you can't measure it, you can't improve it,” said Peter Drucker, one of the most renowned thinkers in the field of management. This principle applies even in our daily lives. An urban dweller wanting to save on her electricity bills would need to know how much electricity she is using before she can figure out when, where and how much to save. A manager would want to assess the performance of his supervisees to help them improve and to manage them better. The same applies to the management of Grievance Redress Mechanisms (GRMs). There are numerous ways to improve the effectiveness of GRMs. To do so, we must first measure or assess the GRM’s current effectiveness so that needed improvements can be identified and developed. A fundamental aspect of this approach is that there need to be widely accepted measurement standards against which effectiveness can be quantified or assessed.

In its recent publication “Remedy in Development Finance,” the United Nations Human Rights Office of the High Commissioner (OHCHR) provided practical guidance on effectively operating a GRM. Although it might be easily missed, one of the most useful resources provided in this publication is at the very end of the report, in Annex II. OHCHR provided an “assessment tool” that allows GRMs or Independent Accountability Mechanisms (IAMs) to evaluate their own effectiveness against the effectiveness criteria from the UN Guiding Principles on Business and Human Rights. There are eight effectiveness criteria that have come to be accepted by the international community as the gold standard for GRMs and IAMs. Against these eight criteria, OHCHR developed 82 qualitative indicators to assess a mechanism’s effectiveness. It is a practical tool to help a GRM understand where to invest more of its resources in improving effectiveness. The Independent Redress Mechanism (IRM) has embraced this assessment tool and undertaken a self-assessment of its effectiveness using the indicators set out by OHCHR. The assessment was revealing and has allowed the IRM to identify areas for improvement (the IRM’s self-assessment report is available here).

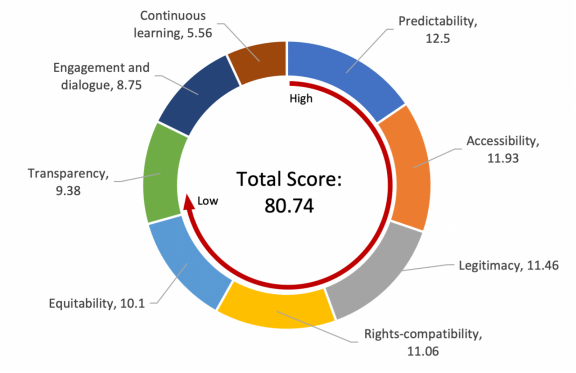

Because the OHCHR’s assessment tool uses qualitative criteria, the IRM had to develop its own methodology to quantify the qualitative information in the IRM’s self-assessment report. The IRM adopted a simple method for doing so. The eight effectiveness criteria were given 100 points in total. Each of the eight criteria was given equal weight, resulting in 12.5 points per criterion (100/8). Similarly, indicators within a criterion were weighted equally. However, since each criterion has a different number of indicators, the 82 indicators developed by OHCHR could not all be allocated the same weight. In a criterion with many indicators, each indicator carries less weight; in a criterion with few indicators, each indicator carries more. For example:

- Where criterion A has 10 indicators, each one would have a weight of 12.5/10 = 1.25

- Where criterion B has 5 indicators, each one would have a weight of 12.5/5 = 2.5

The score given to each indicator was either: 1 (fully met), 0.5 (partially met) or 0 (not met). A detailed description of the methodology is available in the IRM’s self-assessment report. Overall, the IRM scored 80.74 points out of 100. Among the eight effectiveness criteria (Legitimacy; Accessibility; Predictability; Equitability; Transparency; Rights compatibility; Continuous learning; and Engagement and dialogue), it received a perfect score in predictability, and the next highest scores were in accessibility and legitimacy. The last two effectiveness criteria, continuous learning and engagement and dialogue, scored the lowest.
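The weighting and scoring scheme described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the IRM’s actual tooling: the function names and the sample ratings are hypothetical, and the detailed rules (for example, how duplicated indicators are handled) are set out in the IRM’s self-assessment report rather than here.

```python
# Illustrative sketch of the equal-weight scoring scheme described above.
# All names and sample ratings are hypothetical, not taken from the IRM's report.

TOTAL_POINTS = 100.0
ALLOWED_RATINGS = {1.0, 0.5, 0.0}  # fully met, partially met, not met


def indicator_weight(num_indicators, num_criteria=8):
    """Points carried by one indicator in a criterion with num_indicators indicators."""
    if num_indicators <= 0:
        raise ValueError("a criterion needs at least one indicator")
    points_per_criterion = TOTAL_POINTS / num_criteria  # 100 / 8 = 12.5
    return points_per_criterion / num_indicators


def criterion_score(ratings, num_criteria=8):
    """Points earned for one criterion, given one rating per indicator."""
    for rating in ratings:
        if rating not in ALLOWED_RATINGS:
            raise ValueError(f"rating must be 1, 0.5 or 0, got {rating!r}")
    weight = indicator_weight(len(ratings), num_criteria)
    return sum(weight * rating for rating in ratings)


def total_score(assessment):
    """Overall score out of 100 for a mapping of criterion name -> list of ratings."""
    num_criteria = len(assessment)
    return sum(criterion_score(r, num_criteria) for r in assessment.values())


if __name__ == "__main__":
    # The two weights from the example in the text.
    print(indicator_weight(10))  # 1.25
    print(indicator_weight(5))   # 2.5

    # Hypothetical criterion with five indicators:
    # three fully met, one partially met, one not met.
    print(criterion_score([1, 1, 1, 0.5, 0]))  # 8.75
```

Because every criterion is capped at the same number of points, a single unmet indicator costs more in a criterion with few indicators than in one with many, which is worth keeping in mind when comparing scores across criteria.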

“Predictability” scored high thanks to the IRM’s continuous efforts to inform complainants about the entire complaints handling process, from registration of complaints to monitoring and closing of cases. The IRM articulates the kinds of remedies that can result from the IRM’s processes and tries to collaborate with other IAMs to the extent possible. The IRM scored high on “accessibility” as well, mostly because it tries to reach out to as many people and civil society organisations as possible in the regions identified through the IRM’s prioritisation list. It also constantly endeavours to reduce access barriers for less privileged people and communities. Compared to many other mechanisms, the bar to file a complaint with the IRM is low in terms of the timeframe, evidentiary requirements, etc. However, the IRM has yet to develop specific strategies for other marginalised groups such as differently-abled people and can make further improvements in this regard. The IRM also needs to better assess to what extent its outreach efforts are getting critical information about the IRM to project-affected people.

According to its self-assessment, the IRM is performing fairly well in terms of “legitimacy.” It is strictly independent of the GCF Secretariat and reports directly to the Board, at times through the Ethics and Audit Committee (EAC). The IRM staff are held to high standards of ethical conduct and are regularly trained to keep up with good practices in the field of accountability. The IRM will continue to conduct annual stakeholder surveys to assess the needs of its stakeholders and build trust with them. Furthermore, its assessment of “rights-compatibility” demonstrates that the IRM prioritises human rights and non-discrimination throughout its processes and conducts due diligence to protect its stakeholders from retaliation risks. The IRM can make further progress in making its processes more respectful, culturally sensitive and empowering for its stakeholders.

The IRM only partially met several indicators in the “equitability” criterion. The complainants are provided with advisory, technical and financial support, and they are given opportunities to offer comments in the complaints handling process. However, the IRM and the GCF can make the complaints handling process more rigorous by responding to complainants’ comments that are not taken on board. In addition, in 2022, the IRM is planning to train its staff on how to engage with complainants exposed to trauma. In terms of “transparency,” the IRM clearly defines its procedures and makes them available on its website. It also has a publicly available case register and provides regular updates to its stakeholders through annual reports and triannual newsletters. Additionally, during a complaints handling process, the IRM stays in contact with complainants diligently and in a timely manner regarding the status of the case. Including references to the history of IRM cases on the GCF management’s project pages could be one way to further enhance the transparency of the GCF and the IRM.

The “engagement and dialogue” criterion has only six indicators (one of which is a duplicated indicator and is not counted towards the scoring). The IRM failed to meet one indicator and partially met another indicator, leading to a lower total score for this criterion. The IRM has tried to share its experiences and lessons through blogs, newsletters, and advisories. It has also established and led the Grievance Redress and Accountability Mechanisms (GRAM) community of practice to share its experiences and learn from others’ experiences. In addition, although not mandatory, the IRM is open to receiving feedback from its stakeholders with regard to its policies, procedures, and practices. However, the IRM can do more to allow a wider group of stakeholders to engage in dialogues with the IRM, and both the GCF and the IRM can score better on this criterion as they build more institutional experience.

As a relatively new mechanism that has been in existence for just over five years, the IRM has come a long way with regard to fulfilling the eight effectiveness criteria. In addition, the indicators identified by OHCHR have allowed the IRM to assess itself against quantifiable data, and the areas needing improvement have become clearer for the IRM.

As a cautionary note, not all the indicators in the world can give a comprehensive picture of the functioning of a grievance mechanism – not even when all the indicators receive a full score. For example, even though the IRM had a perfect score on “predictability,” it does not mean that the IRM’s processes are completely predictable for all its stakeholders. Similarly, although the IRM scored very high on accessibility, the IRM recognises that there are still some limitations in providing easy enough access to its potential stakeholders and has hired a Communications Associate to strategically strengthen its accessibility. In addition, as the eight effectiveness criteria are closely interlinked and poor performance in one criterion can undermine the performance in other criteria, it is essential to keep a good balance among the criteria. For example, greater transparency could increase accessibility. A high accessibility score coupled with a low transparency score should tell a mechanism that the assessment is not giving a true picture. What the self-assessment tool does is to systematically help identify matters that need improvement and to trigger creative thinking around how those improvements might best be made.

Article prepared by Sue Kyung Hwang and Lalanath de Silva